Editor’s note: This post is part of the AI Decoded series, which demystifies AI by making the technology more accessible, and showcases new hardware, software, tools and accelerations for GeForce RTX PC and NVIDIA RTX workstation users.

From games and content creation apps to software development and productivity tools, AI is increasingly being integrated into applications to enhance user experiences and boost efficiency.

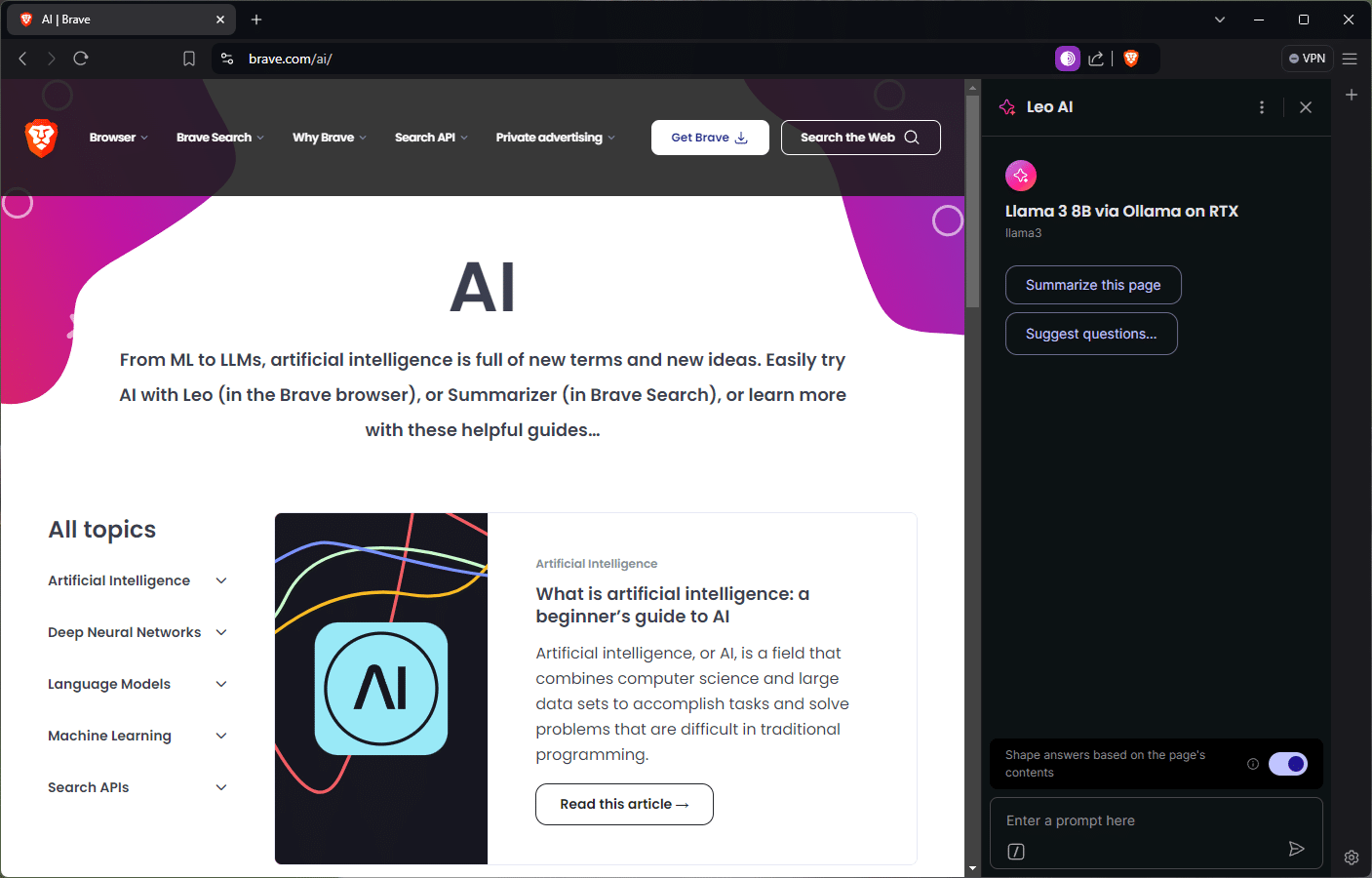

Those efficiency boosts extend to everyday tasks, like web browsing. Brave, a privacy-focused web browser, recently launched a smart AI assistant called Leo AI that, in addition to providing search results, helps users summarize articles and videos, surface insights from documents, answer questions and more.

The technology behind Brave and other AI-powered tools is a combination of hardware, libraries and ecosystem software that’s optimized for the unique needs of AI.

Why Software Matters

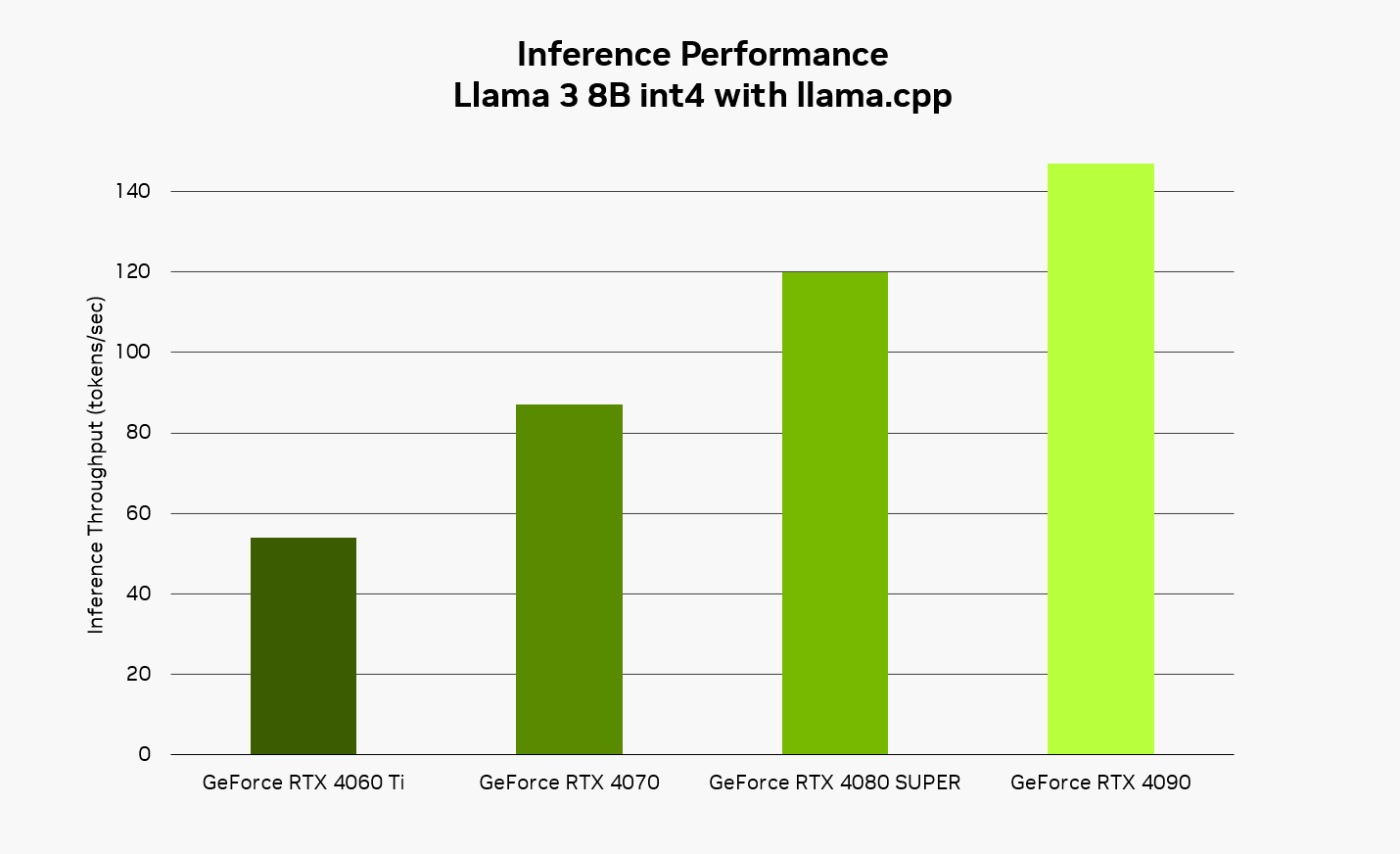

NVIDIA GPUs power the world’s AI, whether running in the data center or on a local PC. They contain Tensor Cores, which are specifically designed to accelerate AI applications like Leo AI through massively parallel number crunching: rapidly processing the huge number of calculations AI needs simultaneously, rather than one at a time.

But great hardware only matters if applications can make efficient use of it. The software running on top of GPUs is just as critical for delivering the fastest, most responsive AI experience.

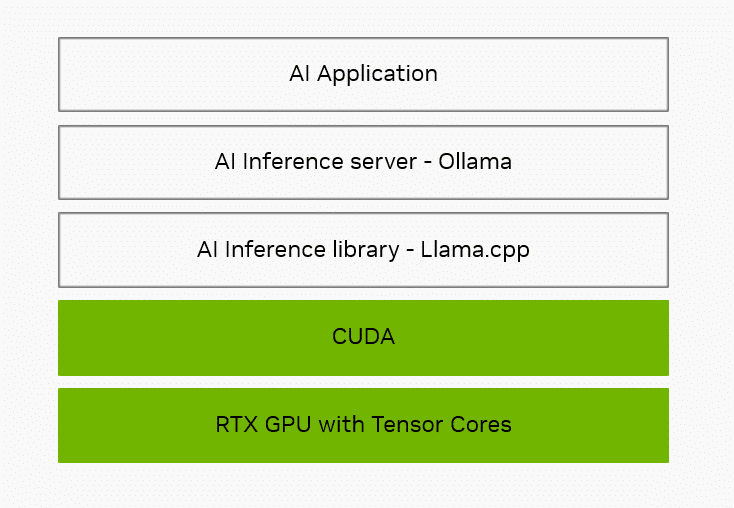

The first layer is the AI inference library, which acts like a translator: it takes requests for common AI tasks and converts them into specific instructions for the hardware to run. Popular inference libraries include NVIDIA TensorRT, Microsoft’s DirectML and llama.cpp, the library Brave and Leo AI use via Ollama.

Llama.cpp is an open-source library and framework. Through CUDA, the NVIDIA software application programming interface that enables developers to optimize for GeForce RTX and NVIDIA RTX GPUs, it provides Tensor Core acceleration for hundreds of models, including popular large language models (LLMs) like Gemma, Llama 3, Mistral and Phi.
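To make the idea of an inference library concrete, here is a minimal sketch of calling llama.cpp from Python. It assumes the third-party llama-cpp-python bindings (not mentioned in this post), installed with CUDA support, and a GGUF model file at a path you supply. The helper names and the context size are illustrative; this is not how Brave or Ollama call the library.

```python
# Minimal sketch, assuming the third-party llama-cpp-python bindings
# built with CUDA support and a local GGUF model file.
# llama_settings() and complete() are hypothetical helper names.


def llama_settings(model_path: str, use_gpu: bool = True) -> dict:
    """Keyword arguments for llama_cpp.Llama.

    n_gpu_layers=-1 asks llama.cpp to offload every model layer to the GPU;
    0 keeps inference entirely on the CPU.
    """
    return {
        "model_path": model_path,
        "n_gpu_layers": -1 if use_gpu else 0,
        "n_ctx": 4096,  # context window in tokens (illustrative value)
    }


def complete(model_path: str, prompt: str, max_tokens: int = 128) -> str:
    """Load the model and return a single text completion."""
    # Imported lazily so llama_settings() works without the package installed.
    from llama_cpp import Llama

    llm = Llama(**llama_settings(model_path))
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]
```

With a model downloaded, `complete("path/to/model.gguf", "Summarize this article.")` would return the generated text. The `n_gpu_layers` setting is what hands the work to llama.cpp’s CUDA backend; a smaller positive value splits layers between GPU and CPU when a model doesn’t fit in GPU memory.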

On top of the inference library, applications often use a local inference server to simplify integration. The inference server handles tasks like downloading and configuring specific AI models so that the application doesn’t have to.

Ollama is an open-source project that sits on top of llama.cpp and provides access to the library’s features. It supports an ecosystem of applications that deliver local AI capabilities. NVIDIA works to optimize tools like Ollama for NVIDIA hardware.

NVIDIA’s focus on optimization spans the entire technology stack, from hardware to system software to the inference libraries and tools that enable applications to deliver faster, more responsive AI experiences on RTX.
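An inference server like Ollama turns model access into a plain HTTP call. The sketch below assumes Ollama is running on its default local port (11434) and that a model such as `llama3` has already been downloaded; it uses only the Python standard library and Ollama’s `/api/generate` endpoint. The helper names are hypothetical.

```python
import json
import urllib.request

# Ollama's default local address; adjust if the server was configured differently.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a POST to Ollama's /api/generate endpoint.

    stream=False asks the server for one JSON object instead of a stream
    of partial responses.
    """
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("llama3", "What is a Tensor Core?")` returns the reply as a string. The application never touches model files or GPU settings; the server handles loading and configuring the model.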

Local vs. Cloud

Brave’s Leo AI can run in the cloud or locally on a PC through Ollama.

There are many benefits to processing inference using a local model. Because prompts aren’t sent to an outside server for processing, the experience is private and always available. For instance, Brave users can get help with their finances or medical questions without sending anything to the cloud. Running locally also eliminates the need to pay for unrestricted cloud access. With Ollama, users can take advantage of a wider variety of open-source models than most hosted services, which often support only one or two varieties of the same AI model.

Users can also interact with models that have different specializations, such as bilingual models, compact-sized models, code generation models and more.

RTX enables a fast, responsive experience when running AI locally. Using the Llama 3 8B model with llama.cpp, users can expect responses of up to 149 tokens per second, or approximately 110 words per second. When using Brave with Leo AI and Ollama, this means snappier responses to questions, requests for content summaries and more.
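The two speed figures above imply roughly 0.74 words per token (110 ÷ 149). The sketch below works through that arithmetic. It assumes Ollama’s response metadata, where `eval_count` is the number of generated tokens and `eval_duration` is the generation time in nanoseconds; the words-per-token ratio comes from this post’s figures and will differ with other tokenizers and text.

```python
# Ratio implied by the figures above: about 110 words per 149 tokens.
# Real ratios vary with the tokenizer and the text being generated.
WORDS_PER_TOKEN = 110 / 149  # ~0.74


def tokens_per_second(eval_count: int, eval_duration_ns: int) -> float:
    """Generation speed from Ollama's eval_count and eval_duration (nanoseconds)."""
    return eval_count * 1e9 / eval_duration_ns


def words_per_second(tps: float, words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Convert a token rate into an approximate word rate."""
    return tps * words_per_token


def seconds_for_words(words: int, tps: float, words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Rough time to generate a response of the given length in words."""
    return words / words_per_second(tps, words_per_token)
```

At 149 tokens per second, a 300-word summary would take about 300 ÷ 110 ≈ 2.7 seconds to generate, not counting the time spent processing the prompt.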

Get Started With Brave’s Leo AI and Ollama

Installing Ollama is easy: download the installer from the project’s website and let it run in the background. From a command prompt, users can download and install a wide variety of supported models, then interact with the local model from the command line.
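The command-line workflow can also be scripted. This sketch assumes the `ollama` executable is installed and on the PATH, and wraps the CLI with Python’s `subprocess` module. The wrapper names are hypothetical; `pull`, `list` and `run` are standard Ollama subcommands.

```python
import shutil
import subprocess


def ollama_argv(*args: str) -> list:
    """Argument list for an ollama CLI call, e.g. ("pull", "llama3")."""
    return ["ollama", *args]


def ollama(*args: str) -> str:
    """Run an ollama subcommand and return its standard output."""
    if shutil.which("ollama") is None:
        raise RuntimeError("ollama is not on PATH; install it from the project's website first")
    done = subprocess.run(ollama_argv(*args), capture_output=True, text=True, check=True)
    return done.stdout
```

For example, `ollama("pull", "llama3")` downloads a model, `ollama("list")` shows what’s installed, and `ollama("run", "llama3", "Explain tokens in one sentence.")` sends a single prompt and returns the reply, matching what typing those commands at the prompt would do.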

For simple instructions on how to add local LLM support via Ollama, read Brave’s blog. Once configured to point to Ollama, Leo AI will use the locally hosted LLM for prompts and queries. Users can also switch between cloud and local models at any time.
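Switching between local and cloud models largely comes down to where requests are sent. The sketch below assumes an OpenAI-style chat completions request, which Ollama serves at `/v1/chat/completions` on its default port. The cloud URL is a hypothetical placeholder, `chat_target()` is a hypothetical helper, and this illustrates the idea rather than how Leo AI is implemented.

```python
import json

# Ollama's OpenAI-compatible chat endpoint on its default port.
LOCAL_ENDPOINT = "http://localhost:11434/v1/chat/completions"
# Hypothetical placeholder standing in for a hosted (cloud) model endpoint.
CLOUD_ENDPOINT = "https://cloud.example.com/v1/chat/completions"


def chat_target(prompt: str, model: str, local: bool = True) -> tuple:
    """Return (endpoint, JSON body) for an OpenAI-style chat completion request.

    Only the endpoint depends on `local`; the request body is identical
    for the local Ollama server and the cloud placeholder.
    """
    endpoint = LOCAL_ENDPOINT if local else CLOUD_ENDPOINT
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return endpoint, json.dumps(body).encode("utf-8")
```

Because the body doesn’t change between targets, an application can expose the choice as a single setting and leave the rest of its code alone.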

Developers can learn more about how to use Ollama and llama.cpp on the NVIDIA Technical Blog.

Generative AI is transforming gaming, videoconferencing and interactive experiences of all kinds. Make sense of what’s new and what’s next by subscribing to the AI Decoded newsletter.